The B(r)and is breaking up. How music services like Spotify are killing artist brands.

Many of us love music streaming services like Spotify and Pandora.

We find it easy and comforting to have the music we love streaming in the background while lounging or working around the house.

What’s also great about it: by analyzing our music selections and feedback, the music services are using AI and machine learning to get better and better at predicting what we like.

As a result, they are able to really home in on the type of music that we want to hear.

As one friend mentioned about one of the streaming services, it “feels like they know me.”

While the streaming music services may “know us” and are savvy at delivering songs that we like to listen to, here’s something that you may end up not knowing: the name of the artist or song you are listening to.

You may not realize it, but the streaming services that we are increasingly listening to through our voice-UI devices like Alexa, Apple’s HomePod and Google Home are slowly divorcing us from the metadata (data that describes other data) around our music content.

Anyone familiar with these devices will know that every interaction with them happens either through your voice requests or through the audio the device delivers in response. No graphics or visual content is presented.

So while you hear a song, metadata such as the artist, the artist’s label and product information, like the album cover, is ignored or stays invisible unless the user asks for that specific information.

Nice song. What’s the name? Who’s the artist?

Unless the user is already familiar with the artist or song, the user is essentially blind to information about the artists they hear on many streaming services. They can only “see” what their ears hear: the song. Not the artist metadata, like the album cover, artist picture, etc.

That metadata makes a difference for identity and brand.

Remember what music was like before streaming, or when you had digital music with metadata built into the music file? If you do, you probably realize you gained an extra connection to an artist through that data.

AC/DC’s bold logo on their album covers or iconic covers like Nirvana’s baby-swimming-in-a-pool Nevermind album gave identity and a visually memorable brand context to their music.

It is a visceral connection, because the images around an artist you listen to often become closely tied to the moments you experienced with the music. For that not to happen would be like going to the senior prom and not remembering what anyone wore or the decorations. Just the songs. Not likely. They’re inseparable.

Yet in streaming, music and metadata are being separated.

Your interaction with the music through a streaming device is usually about telling the voice UI what you want to hear. In playback, the service rarely tells you who the artist is. Unlike radio, there is rarely a DJ telling you that the song was “Closer” by The Chainsmokers featuring Halsey to help brand the music experience you just had or are about to have.

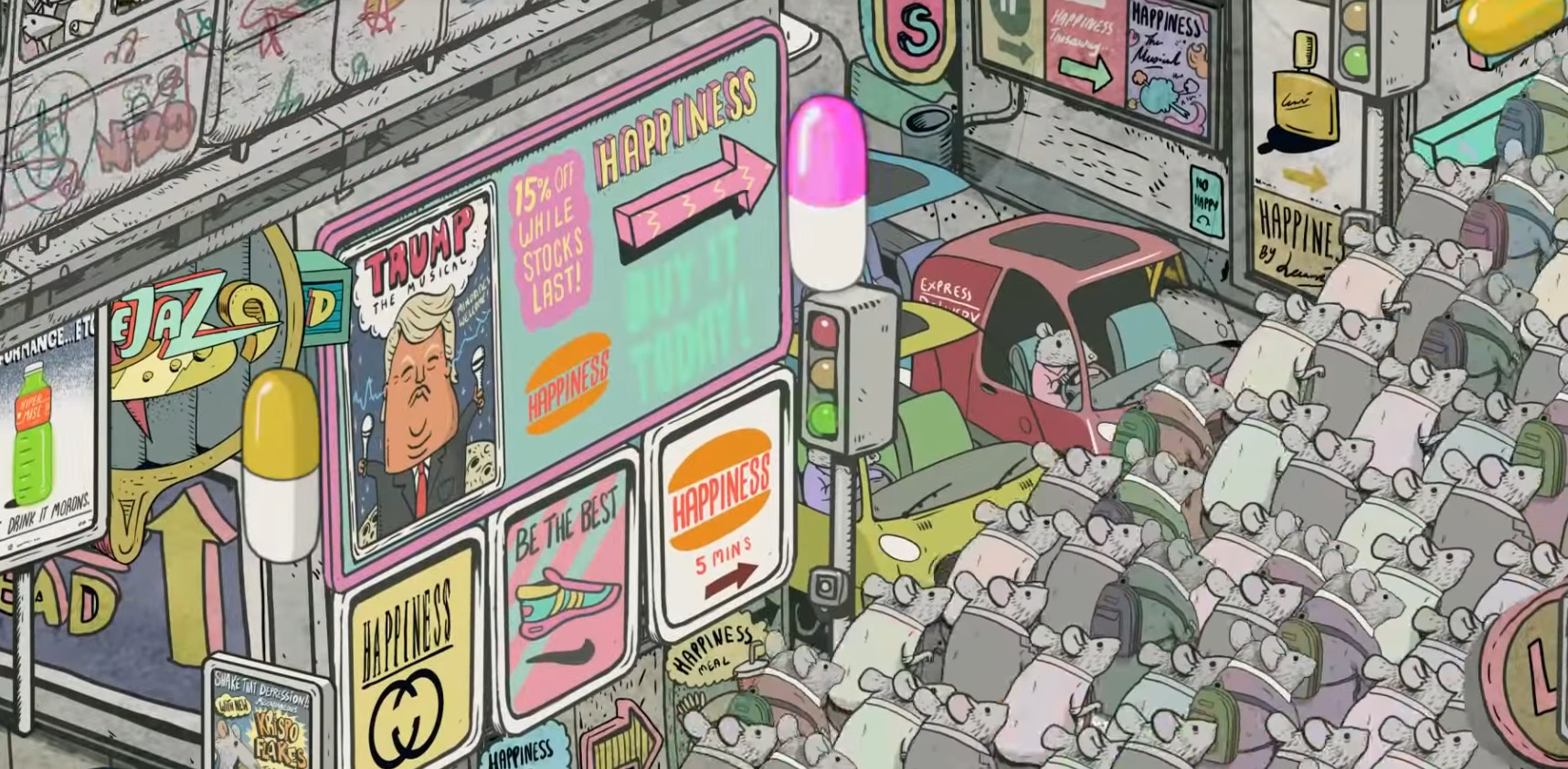

So with little exposure to branding cues, your music service is adding new artists that you like to your feed without a second thought or glance from you, and without those special, brand-able connections.

Soon, the number of great songs you are discovering may begin to outweigh the contextual information that you know about them. Unless you ask Alexa or use a music scanner like Shazam, the song and artist are invisible.

On streaming services, artists make fractions of a penny in royalties for each song you listen to. For many, the money isn’t in streaming. It’s in touring and merchandising, two things where a good brand is extremely valuable.

So for artists who need their name and brand recognized in order to promote themselves and make money from merchandise and on the road, losing their identity via streaming services could be a problem.

Sad song goes here. I don’t care which artist. Just play something I like, Alexa.